A HEIGHTENED FOCUS ON RESPONSE AND RECOVERY

Over a third of directors of US public companies now discuss cybersecurity at every board meeting. Cyber risks are being driven onto the agenda by

- high-profile data breaches,

- distributed denial of services (DDoS) attacks,

- and rising ransomware and cyber extortion attacks.

The concern about cyber risks is justified. The annual economic cost of cyber-crime is estimated at US$1.5 trillion and only about 15% of that loss is currently covered by insurance.

MMC Global Risk Center conducted research and interviews with directors from WCD to understand the scope and depth of cyber risk management discussions in the boardroom. The risk of cyberattack is a constantly evolving threat and the interviews highlighted the rising focus on resilience and recovery in boardroom cyber discussions. Approaches to cyber risks are maturing as organizations recognize them as an enterprise business risk, not just an information technology (IT) problem.

However, board focus varies significantly across industries, geographies, organization size and regulatory context. For example, business executives ranked cyberattacks among the top five risks of doing business in the Asia Pacific region but Asian organizations take 1.7 times longer than the global median to discover a breach and spend on average 47% less on information security than North American firms.

REGULATION ON THE RISE

Tightening regulatory requirements for cybersecurity and breach notification across the globe such as

- the EU GDPR,

- China’s new Cyber Security Law,

- and Australia’s Privacy Amendment,

are also propelling cyber onto the board agenda. Most recently, in February 2018, the USA’s Securities and Exchange Commission (SEC) provided interpretive guidance to assist public companies in preparing disclosures about cybersecurity risks and incidents.

Regulations relating to transparency and notifications around cyber breaches drive greater discussion and awareness of cyber risks. Industries such as

- financial services,

- telecommunications

- and utilities,

are subject to a large number of cyberattacks on a daily basis and have stringent regulatory requirements for cybersecurity.

Kris Manos, Director, KeyCorp, Columbia Forest Products, and Dexter Apache Holdings, observed, “The manufacturing sector is less advanced in addressing cyber threats; the NotPetya and WannaCry attacks flagged that sector’s vulnerability and has led to a greater focus in the boardroom.” For example, the virus forced a transportation company to shut down all of its communications with customers and also within the company. It took several weeks before business was back to normal, and the loss of business was estimated to have been as high as US$300 million. Overall, it is estimated that as a result of supply chain disruptions, consumer goods manufacturers, transport and logistics companies, pharmaceutical firms and utilities reportedly suffered, in aggregate, over US$1 billion in economic losses from the NotPetya attacks. Also, as Cristina Finocchi Mahne, Director, Inwit, Italiaonline, Banco Desio, Natuzzi and Trevi Group, noted, “The focus on cyber can vary across industries depending also on their perception of their own clients’ concerns regarding privacy and data breaches.”

LESSONS LEARNED: UPDATE RESPONSE PLANS AND EVALUATE THIRD-PARTY RISK

The high-profile cyberattacks in 2017, along with new and evolving ransomware onslaughts, were learning events for many organizations. Lessons included the need to establish relationships with organizations that can assist in the event of a cyberattack, such as l

- aw enforcement,

- regulatory agencies and recovery service providers

- including forensic accountants and crisis management firms.

Many boards need to increase their focus on their organization’s cyber incident response plans. A recent global survey found that only 30% of companies have a cyber response plan and a survey by the National Association of Corporate Directors (NACD) suggests that only 60% of boards have reviewed their breach response plan over the past 12 months. Kris Manos noted, “[If an attack occurs,] it’s important to be able to quickly access a response plan. This also helps demonstrate that the organization was prepared to respond effectively.”

Experienced directors emphasized the need for effective response plans alongside robust cyber risk mitigation programs to ensure resilience, as well as operational and reputation recovery. As Jan Babiak, Director, Walgreens Boots Alliance, Euromoney Institutional Investor, and Bank of Montreal, stressed, “The importance of the ’respond and recover’ phase cannot be overstated, and this focus needs to rapidly improve.”

Directors need to review how the organization will communicate and report breaches. Response plans should include preliminary drafts of communications to all stakeholders including customers, suppliers, regulators, employees, the board, shareholders, and even the general public. The plan should also consider legal requirements around timelines to report breaches so the organization is not hit with financial penalties that can add to an already expensive and reputationally damaging situation. Finally, the response plan also needs to consider that normal methods of communication (websites, email, etc.) may be casualties of the breach. A cyber response plan housed only on the corporate network may be of little use in a ransomware attack.

Other lessons included the need to focus on cyber risks posed by third-party suppliers, vendors and other impacts throughout the supply chain. Shirley Daniel, Director, American Savings Bank, and Pacific Asian Management Institute, noted, “Such events highlight vulnerability beyond your organization’s control and are raising the focus on IT security throughout the supply chain.” Survey data suggests that about a third of organizations do not assess the cyber risk of vendors and suppliers. This is a critical area of focus as third-party service providers (e.g., software providers, cloud services providers, etc.) are increasingly embedded in value chains.

FRUSTRATIONS WITH OVERSIGHT

Most directors expressed frustrations and challenges with cyber risk oversight even though the topic is frequently on meeting agendas. Part of the challenge is that director-level cyber experts are thin on the ground; most boards have only one individual serving as the “tech” or “cyber” person. A Spencer Stuart survey found that 41% of respondents said their board had at least one director with cyber expertise, with an additional 7% who are in the process of recruiting one. Boards would benefit from the addition of experienced individuals who can identify the connections between cybersecurity and overall company strategy.

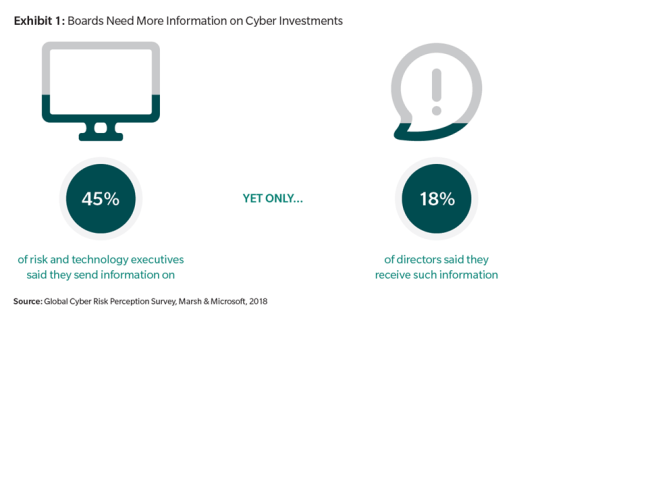

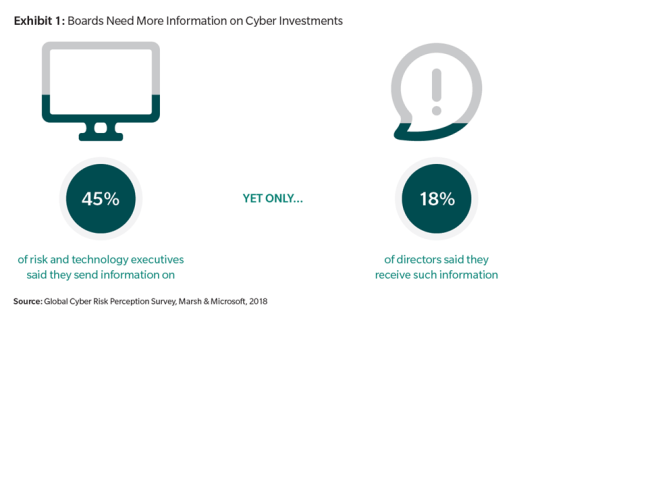

A crucial additional challenge is obtaining clarity on the organization’s overall cyber risk management framework. (See Exhibit 1: Boards Need More Information on Cyber Investments.) Olga Botero, Director, Evertec, Inc., and Founding Partner, C&S Customers and Strategy, observed, “There are still many questions unanswered for boards, including:

- How good is our security program?

- How do we compare to peers?

There is a big lack of benchmarking on practices.” Anastassia Lauterbach, Director, Dun & Bradstreet, and member of Evolution Partners Advisory Board, summarized it well, “Boards need a set of KPIs for cybersecurity highlighting their company’s

- unique business model,

- legacy IT,

- supplier and partner relationships,

- and geographical scope.”

Nearly a quarter of boards are dissatisfied with the quality of management-provided information related to cybersecurity because of insufficient transparency, inability to benchmark and difficulty of interpretation.

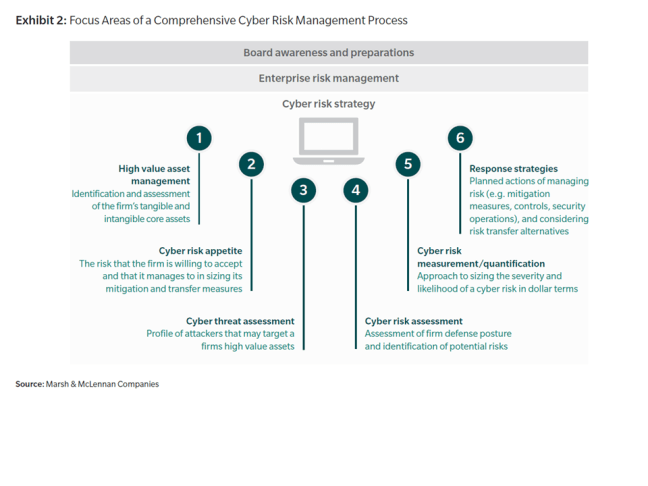

EFFECTIVE OVERSIGHT IS BUILT ON A COMPREHENSIVE CYBER RISK MANAGEMENT FRAMEWORK

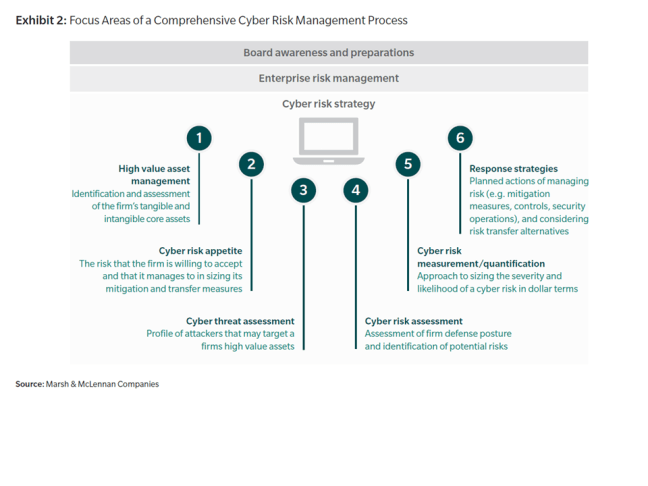

Organizations are maturing from a “harden the shell” approach to a protocol based on understanding and protecting core assets and optimizing resources. This includes the application of risk disciplines to assess and manage risk, including quantification and analytics. (See Exhibit 2: Focus Areas of a Comprehensive Cyber Risk Management Framework.) Quantification shifts the conversation from a technical discussion about threat vectors and system vulnerabilities to one focused on maximizing the return on an organization’s cyber spending and lowering its total cost of risk.

Directors also emphasized the need to embed the process in an overall cyber risk management framework and culture. “The culture must emphasize openness and learning from mistakes. Culture and cyber risk oversight go hand in hand,” said Anastassia Lauterbach. Employees should be encouraged to flag and highlight potential cyber incidents, such as phishing attacks, as every employee plays a vital role in cyber risk management. Jan Babiak noted, “If every person in the organization doesn’t view themselves as a human firewall, you have a soft underbelly.” Mary Beth Vitale, Director, GEHA and CoBiz Financial, Inc., also noted, “Much of cyber risk mitigation is related to good housekeeping such as timely patching of servers and ongoing employee training and alertness.”

Boards also need to be alert. “Our board undertakes the same cybersecurity training as employees,” noted Wendy Webb, Director, ABM Industries. Other boards are putting cyber updates and visits to security centers on board “offsite” agendas.

THE ROLE OF CYBER INSURANCE

Although the perception of many directors is that cyber insurance provides for limited coverage, the insurance is increasingly viewed as an important component of a cyber risk management framework and can support response and recovery plans. Echoing this sentiment, Geeta Mathur, Director, Motherson Sumi Ltd, IIFL Holdings Ltd, and Tata Communication Transformation Services Ltd., commented, « There is a lack of information and discussion on risk transfer options at the board level. The perception is that it doesn’t cover much particularly relating to business interruption on account of cyber threats.” Cristina Finocchi Mahne also noted, “Currently, management teams may not have a positive awareness of cyber insurance, but we expect this to rapidly evolve over the short-term.”

Insurance does not release the board or management from the development and execution of a robust risk management plan but it can provide a financial safeguard against costs associated with a cyber event. Cyber insurance coverage should be considered in the context of an overall cyber risk management process and cyber risk appetite.

With a robust analysis, the organization can

- quantify the price of cyber risk,

- develop effective risk mitigation,

- transfer and risk financing strategy,

- and decide if – and how much – cyber insurance to purchase.

This allows the board to have a robust conversation on the relationship between risk, reward and the cost of mitigation and can also prompt an evaluation of potential consequences by using statistical modeling to assess different damage scenarios.

CYBER INSURANCE ADOPTION IS INCREASING

The role of insurance in enhancing cyber resilience is increasingly being recognized by policymakers around the world, and the Organisation of Economic Co-operation and Development (OECD) is recommending actions to stimulate cyber insurance adoption.

Globally, it is expected the level of future demand for cyber insurance will depend on the frequency of high-profile cyber incidents as well as the evolving legislative and regulatory environment for privacy protections in many countries. In India, for example, there was a 50% increase in companies buying cybersecurity coverage 2016 to 2017. Research suggests that only 40% of US boards have reviewed their organization’s cyber insurance coverage in the past 12 months.

LIMITING FINANCIAL LOSSES

In the event of a debilitating attack, cyber insurance and associated services can limit an organization’s financial damage from direct and indirect costs and help accelerate its recovery. (See Exhibit 3: Direct and Indirect Costs Associated with a Cyber Attack.) For example, as a result of the NotPetya attack, one global company reported a decline in operating margins and income, with losses in excess of US$500 million in the last fiscal year. The company noted the costs were driven by

- investments in enhanced systems in order to prevent future attacks;

- cost of incentives offered to customers to restore confidence and maintain business relationships;

- additional costs due to claims for service failures; costs associated with data breach or data loss due to third-parties;

- and “other consequences of which we are not currently aware but may subsequently discover.”

Indeed, the very process of assessing and purchasing cyber insurance can bolster cyber resilience by creating important incentives that drive behavioral change, including:

- Raising awareness inside the organization on the importance of information security.

- Fostering a broader dialogue among the cyber risk stakeholders within an organization.

- Generating an organization-wide approach to ongoing cyber risk management by all aspects of the organization.

- Assessing the strength of cyber defenses, particularly amid a rapidly changing cyber environment.

Click here to access Marsh’s and WCD’s detailed report